CNCF leadership used the keynote to argue that only a few months ago, teams were still asking what inference was. Now the question is how to scale it (EfficientlyConnected). That's a fast shift, and it tells you something about where the next operational pressure lands.

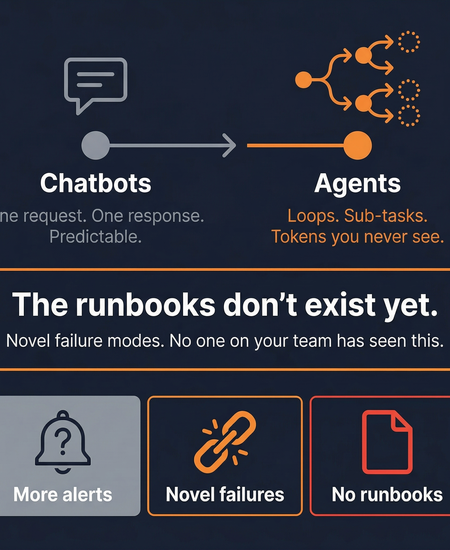

Agents are the scale event. Chatbots were predictable: one request in, one response out. Agents run in loops, spawn sub-tasks, and consume tokens on internal reasoning steps you never see. The operational profile is completely different.

I've been thinking about what this means for platform engineering teams. More AI agents in production means more alert volume, more novel failure modes, and fewer people on your team who've seen this class of problem before. The runbooks don't exist yet.

The first challenge every organization faces when adopting agentic AI is establishing control: agents, MCP tools, prompts, and agent skills proliferate across teams with no centralized visibility or governance (GlobeNewswire). That's the tooling problem. But there's an equally hard problem behind it: when these systems fail, who investigates?

The trend from Amsterdam is clear. AI is moving into Kubernetes infrastructure, not alongside it. The teams who figure out how to operate it, not just deploy it, will have the real advantage.

Sources: "Kubernetes Becomes the Control Plane for AI Infrastructure," EfficientlyConnected.com, March 30, 2026; "Solo.io Introduces agentevals," GlobeNewswire, March 25, 2026.
